Up to now, we offered the 1,000 languages initiative and the Common Speech Type with the purpose of creating speech and language applied sciences to be had to billions of customers around the globe. A part of this dedication comes to creating high quality speech synthesis applied sciences, which construct upon initiatives comparable to VDTTS and AudioLM, for customers that talk many alternative languages.

|

After creating a brand new style, one should review whether or not the speech it generates is correct and herbal: the content material should be related to the duty, the pronunciation proper, the tone suitable, and there must be no acoustic artifacts comparable to cracks or signal-correlated noise. Such analysis is a significant bottleneck within the construction of multilingual speech programs.

The most well liked way to review the standard of speech synthesis fashions is human analysis: a text-to-speech (TTS) engineer produces a couple of thousand utterances from the newest style, sends them for human analysis, and receives effects a couple of days later. This analysis section normally comes to listening assessments, right through which dozens of annotators pay attention to the utterances one by one to resolve how herbal they sound. Whilst people are nonetheless unbeaten at detecting whether or not a work of textual content sounds herbal, this procedure may also be impractical â particularly within the early phases of analysis initiatives, when engineers want speedy comments to check and restrategize their means. Human analysis is pricey, time eating, and could also be restricted by way of the supply of raters for the languages of passion.

Every other barrier to development is that other initiatives and establishments normally use more than a few rankings, platforms and protocols, which makes apples-to-apples comparisons unimaginable. On this regard, speech synthesis applied sciences lag in the back of textual content era, the place researchers have lengthy complemented human analysis with computerized metrics comparable to BLEU or, extra not too long ago, BLEURT.

In “SQuId: Measuring Speech Naturalness in Many Languages“, to be offered at ICASSP 2023, we introduce SQuId (Speech High quality Id), a 600M parameter regression style that describes to what extent a work of speech sounds herbal. SQuId is according to mSLAM (a pre-trained speech-text style evolved by way of Google), fine-tuned on over 1,000,000 high quality rankings throughout 42 languages and examined in 65. We display how SQuId can be utilized to enrich human rankings for analysis of many languages. That is the biggest printed effort of this sort thus far.

Comparing TTS with SQuId

The primary speculation in the back of SQuId is that coaching a regression style on in the past accumulated rankings can give us with a cheap approach for assessing the standard of a TTS style. The style can due to this fact be a precious addition to a TTS researcher’s analysis toolbox, offering a near-instant, albeit much less correct choice to human analysis.

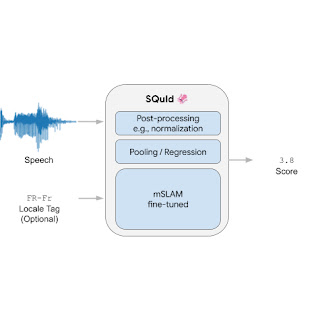

SQuId takes an utterance as enter and an not obligatory locale tag (i.e., a localized variant of a language, comparable to “Brazilian Portuguese” or “British English”). It returns a ranking between 1 and 5 that signifies how herbal the waveform sounds, with a better worth indicating a extra herbal waveform.

Internally, the style comprises 3 parts: (1) an encoder, (2) a pooling / regression layer, and (3) a completely hooked up layer. First, the encoder takes a spectrogram as enter and embeds it right into a smaller 2D matrix that comprises 3,200 vectors of dimension 1,024, the place every vector encodes a time step. The pooling / regression layer aggregates the vectors, appends the locale tag, and feeds the outcome into a completely hooked up layer that returns a ranking. In the end, we observe application-specific post-processing that rescales or normalizes the ranking so it’s inside the [1, 5] vary, which is commonplace for naturalness human rankings. We teach the entire style end-to-end with a regression loss.

The encoder is by way of a ways the biggest and maximum essential piece of the style. We used mSLAM, a pre-existing 600M-parameter Conformer pre-trained on each speech (51 languages) and textual content (101 languages).

|

| The SQuId style. |

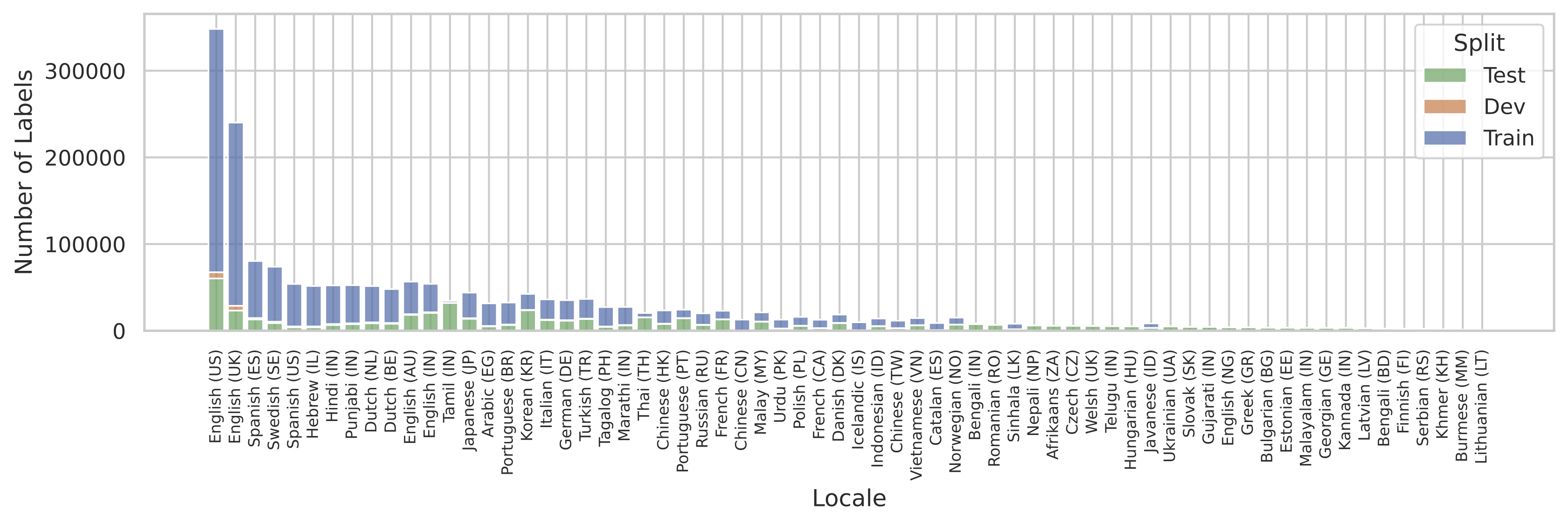

To coach and review the style, we created the SQuId corpus: a selection of 1.9 million rated utterances throughout 66 languages, accumulated for over 2,000 analysis and product TTS initiatives. The SQuId corpus covers a various array of programs, together with concatenative and neural fashions, for a large vary of use instances, comparable to using instructions and digital assistants. Handbook inspection finds that SQuId is uncovered to an unlimited vary of of TTS mistakes, comparable to acoustic artifacts (e.g., cracks and pops), fallacious prosody (e.g., questions with out emerging intonations in English), textual content normalization mistakes (e.g., verbalizing “7/7” as “seven divided by way of sevenâ somewhat than “July 7th”), or pronunciation errors (e.g., verbalizing “difficult” as “toe”).

A commonplace factor that arises when coaching multilingual programs is that the learning information is probably not uniformly to be had for all of the languages of passion. SQuId used to be no exception. The next determine illustrates the dimensions of the corpus for every locale. We see that the distribution is in large part ruled by way of US English.

|

| Locale distribution within the SQuId dataset. |

How are we able to supply excellent efficiency for all languages when there are such diversifications? Impressed by way of earlier paintings on device translation, in addition to previous paintings from the speech literature, we made up our minds to coach one style for all languages, somewhat than the use of separate fashions for every language. The speculation is if the style is huge sufficient, then cross-locale switch can happen: the style’s accuracy on every locale improves on account of collectively coaching at the others. As our experiments display, cross-locale proves to be a formidable driving force of efficiency.

Experimental effects

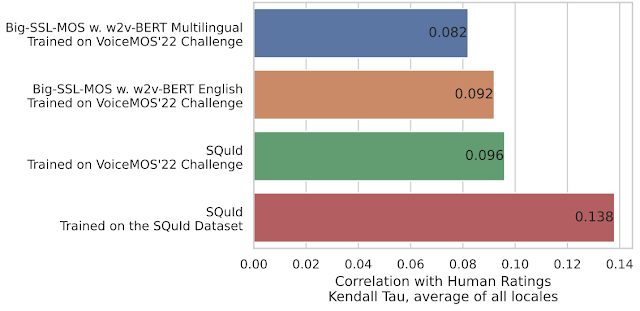

To grasp SQuId’s general efficiency, we examine it to a customized Giant-SSL-MOS style (described within the paper), a aggressive baseline impressed by way of MOS-SSL, a cutting-edge TTS analysis machine. Giant-SSL-MOS is according to w2v-BERT and used to be skilled at the VoiceMOS’22 Problem dataset, the preferred dataset on the time of analysis. We experimented with a number of variants of the style, and located that SQuId is as much as 50.0% extra correct.

|

| SQuId as opposed to cutting-edge baselines. We measure settlement with human rankings the use of the Kendall Tau, the place a better worth represents higher accuracy. |

To grasp the affect of cross-locale switch, we run a chain of ablation research. We range the quantity of locales offered within the coaching set and measure the impact on SQuId’s accuracy. In English, which is already over-represented within the dataset, the impact of including locales is negligible.

|

| SQuId’s efficiency on US English, the use of 1, 8, and 42 locales right through fine-tuning. |

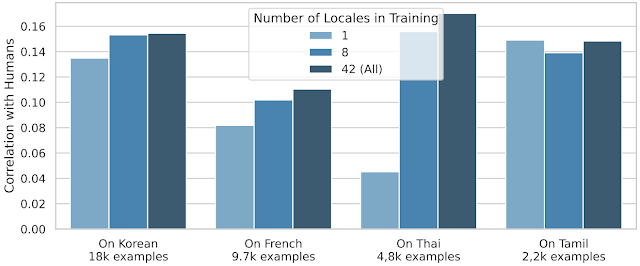

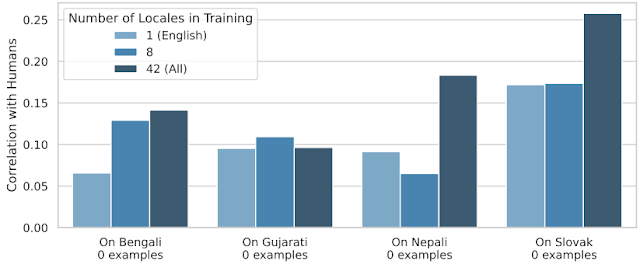

Alternatively, cross-locale switch is a lot more efficient for many different locales:

To push switch to its prohibit, we held 24 locales out right through coaching and used them for trying out completely. Thus, we measure to what extent SQuId can take care of languages that it hasn’t ever observed earlier than. The plot under displays that even if the impact isn’t uniform, cross-locale switch works.

|

| SQuId’s efficiency on 4 “zero-shot” locales; the use of 1, 8, and 42 locales right through fine-tuning. |

When does cross-locale perform, and the way? We provide many extra ablations within the paper, and display that whilst language similarity performs a task (e.g., coaching on Brazilian Portuguese is helping Eu Portuguese) it’s unusually a ways from being the one issue that issues.

Conclusion and long term paintings

We introduce SQuId, a 600M parameter regression style that leverages the SQuId dataset and cross-locale studying to guage speech high quality and describe how herbal it sounds. We display that SQuId can supplement human raters within the analysis of many languages. Long term paintings comprises accuracy enhancements, increasing the variety of languages coated, and tackling new error sorts.

Acknowledgements

The creator of this publish is now a part of Google DeepMind. Many due to all authors of the paper: Ankur Bapna, Joshua Camp, Diana Mackinnon, Ankur P. Parikh, and Jason Riesa.